Chaos V-Ray 7 for 3ds Max now features AI-driven tools for materials creation and automated scene enhancement, shifting scenes from day to night and exchanging feedback via the cloud.

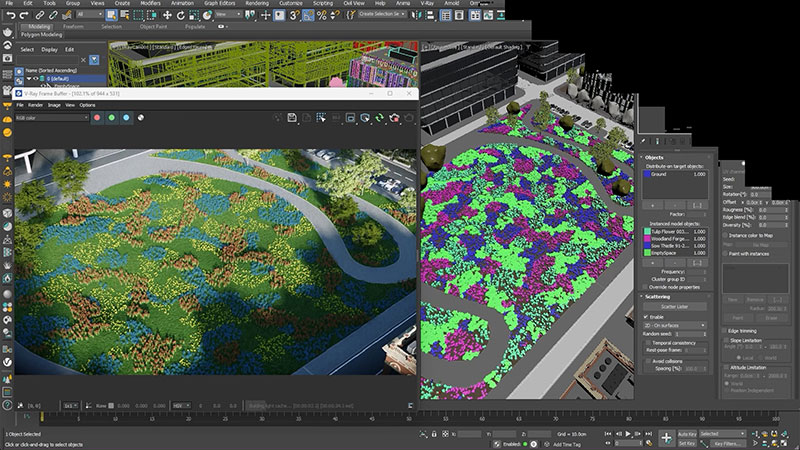

Scatter clustering

Chaos V-Ray 7 for 3ds Max now has over a dozen new features following the release of Update 2. The updates include an AI-driven tool that can create materials from photography, and another that can automatically enhance and upscale scenes.

Developed for 3D artists and designers in various industries, the update supports all stages of creative production, from lighting night skies to avoiding typical technical limitations when sharing scenes. Users now have more control and flexibility while managing pipelines.

Allan Poore, chief product officer of Chaos, said that the company's intention has been to use AI to develop options that address specific user challenges. "For example, the AI Material Generator and AI Enhancer can be used to build scenes faster and easier," he said. "Adding new AI tools and better collaborative options through the Cloud, to speed up and improve the process from iteration to presentation, will make it easier to achieve photorealism with less manual effort."

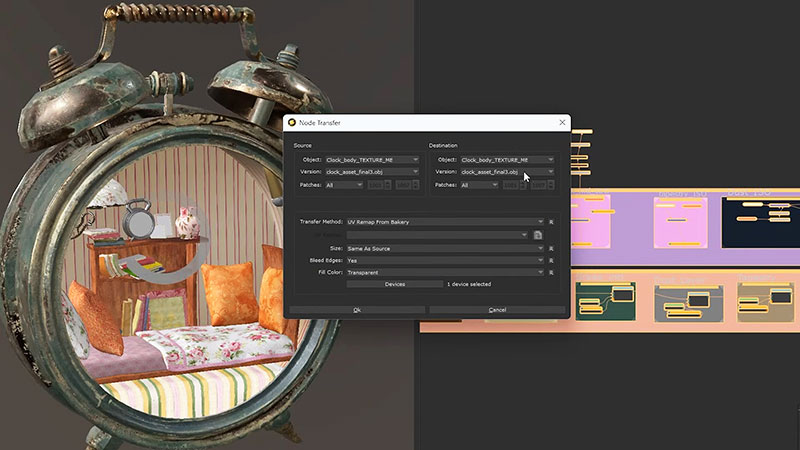

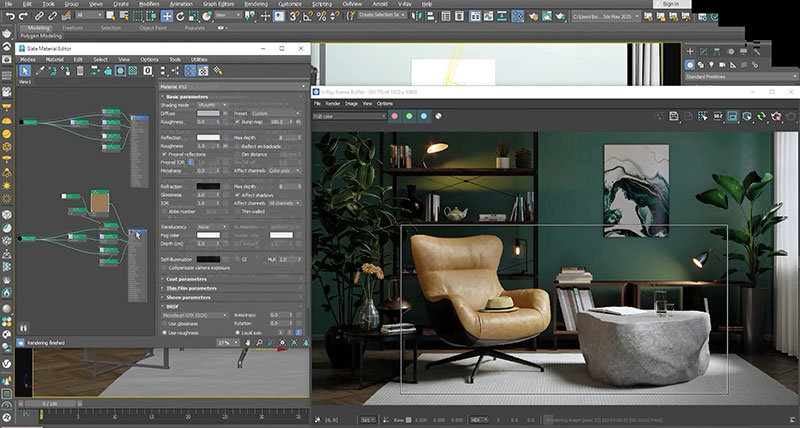

Material generation

The AI-powered Chaos AI Enhancer automatically identifies and improves people, vegetation and other focal areas in renders. The Chaos AI Material Generator gives users the ability to create their own materials using photographs. Once an image has been uploaded into the Chaos Cosmos asset library, accessed from inside V-Ray, the AI Material Generator creates a PBR material and texture maps, which can then be used to quickly complete a full environment.

Both tools are currently in beta and will continue to receive updates based on user feedback.

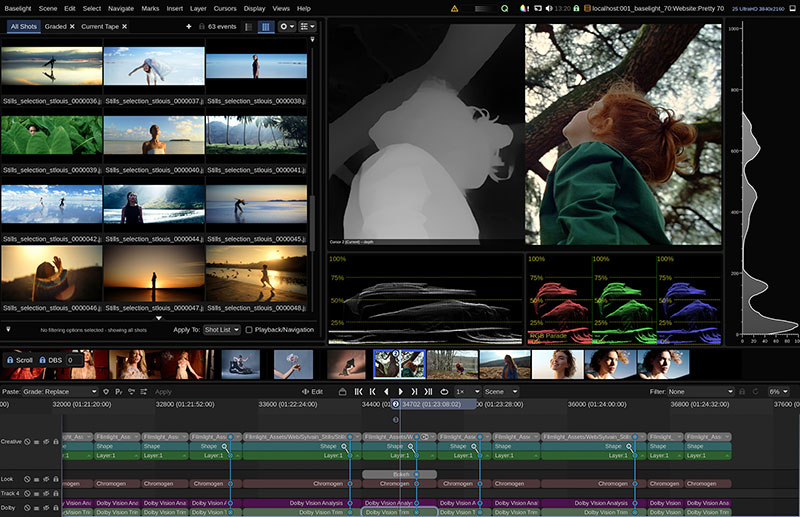

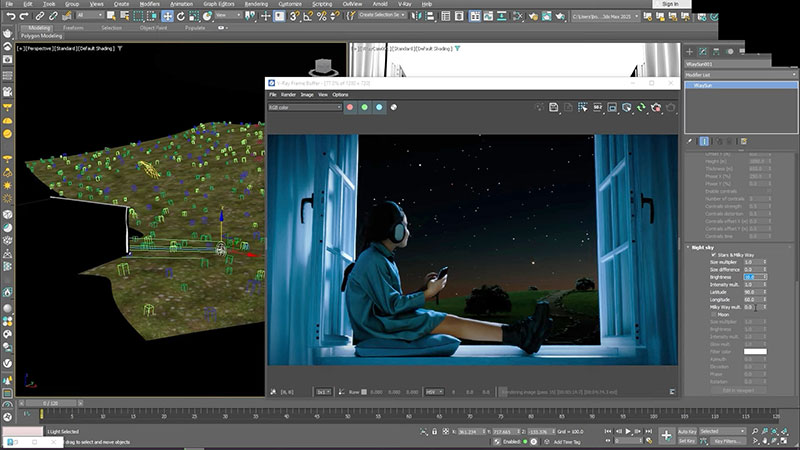

Night and Day

To further improve the look of a project, artists working in V-Ray for 3ds Max can shift a daylight scene to evening and populate the sky with astronomically accurate stars. The new Night Sky options add constellations, phases of the moon and even the full Milky Way, all positioned to accurately reflect chosen dates and geographic locations. Other options related to the position, phase and other aspects of the sun node give users further creative choices.

Moving from evening to night.

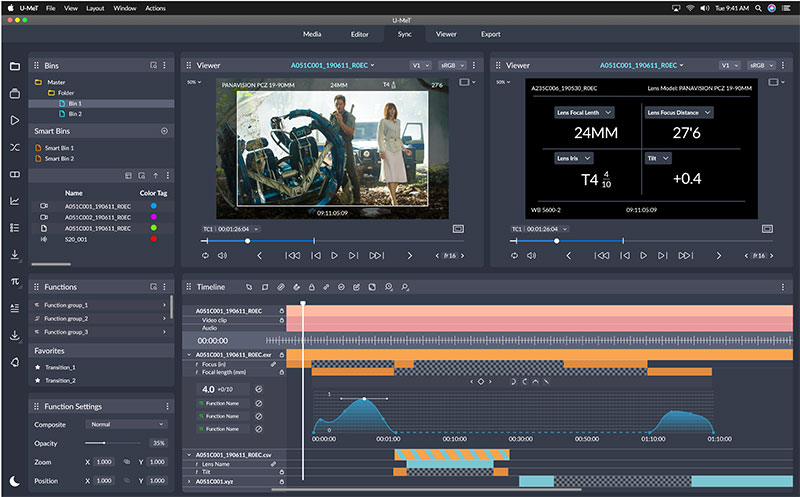

Splat Clipping and Scatter Clustering

Update 2 also adds a new Gaussian Splats clipping feature to trim 3D splats directly within 3ds Max. Gaussian splatting is useful for its combination of realism, efficiency and speed. Being able to trim the splat results to only what a scene needs, ahead of rendering and while still in 3ds Max, increases efficiency further, especially in terms of memory use.
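The memory saving from clipping can be illustrated with a minimal Python sketch. It is not V-Ray's implementation or API: the `Splat` type and the `clip_to_box` helper are hypothetical names, each splat is reduced to its centre position, and the clip region is assumed to be an axis-aligned box.

```python
from typing import NamedTuple


class Splat(NamedTuple):
    """Hypothetical stand-in for one Gaussian splat (centre position only)."""
    x: float
    y: float
    z: float


def clip_to_box(splats, box_min, box_max):
    """Keep only the splats whose centre lies inside an axis-aligned box."""
    return [
        s for s in splats
        if all(lo <= c <= hi for c, lo, hi in zip(s, box_min, box_max))
    ]


splats = [Splat(0.0, 0.0, 0.0), Splat(5.0, 1.0, 0.0), Splat(0.5, 0.5, 0.5)]
kept = clip_to_box(splats, box_min=(-1.0, -1.0, -1.0), box_max=(1.0, 1.0, 1.0))
print(f"{len(kept)} of {len(splats)} splats kept")
```

Only the splats that survive the clip need to be held in memory at render time, which is where the saving comes from.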

New scatter clustering allows users to group various scattered objects automatically, according to three modes. Generate mode creates clusters based on noise calculations. Colour map mode allows users to choose which objects should appear within the different colours shown on the map, and Layer paint mode defines specifically which objects belong to a particular layer and lets those layers be painted directly in 3ds Max.
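As a rough illustration of how noise can drive clustering, the Python sketch below gives every scattered point the object type chosen by a pseudo-random value for its grid cell, so neighbouring points end up with the same object. This is an assumption about the general idea, not a description of how Generate mode works internally: `cell_noise`, `assign_clusters`, the cell size and the object names are all hypothetical.

```python
import random


def cell_noise(ix: int, iy: int, seed: int = 0) -> float:
    """Deterministic pseudo-random value in [0, 1) for one grid cell."""
    return random.Random((ix * 73856093) ^ (iy * 19349663) ^ seed).random()


def assign_clusters(points, object_types, cell_size=10.0, seed=0):
    """Pick an object type for each point from the noise value of its cell."""
    assignments = []
    for x, y in points:
        n = cell_noise(int(x // cell_size), int(y // cell_size), seed)
        index = min(int(n * len(object_types)), len(object_types) - 1)
        assignments.append(object_types[index])
    return assignments


# The first two points share one grid cell, the last two share another.
points = [(1.0, 2.0), (3.5, 8.0), (42.0, 17.0), (44.0, 12.5)]
types = ["grass", "shrub", "rock"]
result = assign_clusters(points, types)
print(result)
```

A production scatter tool would use smooth noise rather than per-cell values, giving organic cluster outlines instead of square ones.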

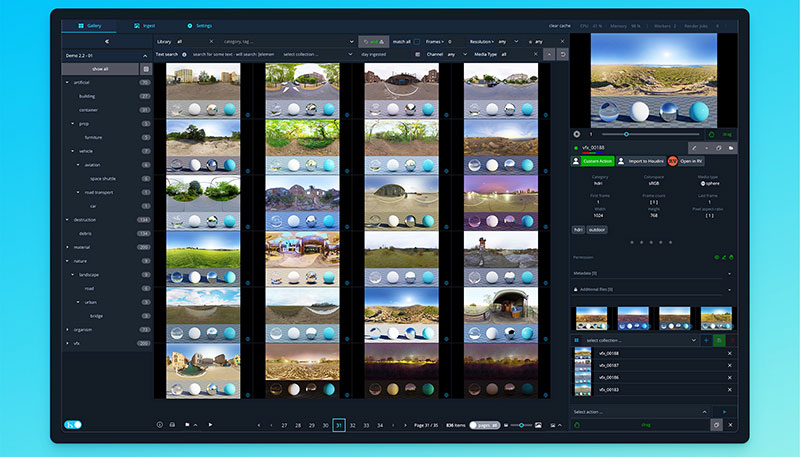

Cloud Collaboration Beta

When projects are ready to share, users now have Chaos Cloud 3D Streaming, a new feature that helps teams collaborate more effectively. Once a scene has been uploaded, clients and project collaborators can access the 3D model remotely on a computer or a mobile device via a URL and provide feedback without requiring extra hardware or tools.

Anyone, regardless of skill level, can place a comment or a pin, making it easier to gather feedback and iterate on changes as needed. Chaos Cloud 3D Streaming is currently in beta and open to all users, with new features coming in the next few months.

Cloud GPU Rendering

Along with the new features, Chaos Cloud GPU rendering has undergone recent improvements to its infrastructure, increasing speed by up to three times, according to Chaos. It will now also be possible to use local V-Ray GPU for Gaussian Splats rendering, and to render the new night sky options, AI-generated materials and V-Ray Luminaire. GPU Caustics include new support for dispersion and better memory usage and performance.

Efficiency Upgrades

Some convenient new features have been added to help users work more efficiently, for example importing multiple assets at once, and an option in the Denoiser Workflow to remove the extra denoised data from files before saving, reducing their size. Support for the OpenPBR shading model gives consistent results across compatible applications.

Distributed Rendering

The new Distributed Rendering 2 system improves performance scaling, and progressive rendering now scales better across multiple machines. With VRayProxy Viewport Performance optimisation, loading is delayed so that artists can start working immediately while assets continue to load in the background.

Using LightMix, it is now possible to manage up to 256 unique light selects, editing lights individually or as a group and adjusting their intensity and colour. Further, with new sliders for light tones, dark tones and shadows, users have layer controls for adjusting tone mapping and exposure.
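The principle behind this kind of post-render relighting can be sketched in a few lines of Python, assuming each light select stores one light's additive contribution to a pixel. The function name, the light names and the values are hypothetical, and the sketch says nothing about how LightMix is implemented inside V-Ray.

```python
def mix_lights(light_selects, settings):
    """Combine per-light RGB contributions using intensity and colour multipliers."""
    pixel = [0.0, 0.0, 0.0]
    for name, rgb in light_selects.items():
        # Lights without an explicit setting keep their rendered contribution.
        intensity, tint = settings.get(name, (1.0, (1.0, 1.0, 1.0)))
        for i in range(3):
            pixel[i] += rgb[i] * intensity * tint[i]
    return tuple(pixel)


# One pixel's contribution from each light, as rendered.
light_selects = {"sun": (0.5, 0.5, 0.25), "lamp": (0.25, 0.125, 0.0)}
# Per-light (intensity, RGB tint) adjustments made after rendering.
settings = {"sun": (0.5, (1.0, 1.0, 1.0)), "lamp": (2.0, (1.0, 0.5, 1.0))}
mixed = mix_lights(light_selects, settings)
print(mixed)
```

Because the contributions are simply scaled and summed, lighting can be rebalanced without rendering the scene again.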

V-Ray now has a Two-Sided Texture used to assign different maps to front and back surfaces for labels, fabrics or paper-based materials. A new ability to apply a mapping source to the MultisubTex sub-maps gives faster control over all sub textures, and simpler tree structures in the material editor. Users can also use a probability column in V-Ray Multisub Textures to change how likely each texture or colour is to appear, increasing or decreasing the amount of a specific texture to create more texture variants more easily.

www.chaos.com/vray
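The effect of a probability column on Multisub textures can be approximated with a weighted random choice. The Python sketch below is illustrative only: the texture names, the weights and the `pick_textures` helper are hypothetical, and V-Ray's own selection logic is not documented here.

```python
import random


def pick_textures(textures, probabilities, count, seed=0):
    """Choose a texture for each of `count` objects, weighted by probability."""
    rng = random.Random(seed)  # fixed seed so the result is repeatable
    return rng.choices(textures, weights=probabilities, k=count)


textures = ["oak", "walnut", "pine"]
probabilities = [6, 3, 1]  # relative weights; they need not sum to 1
picks = pick_textures(textures, probabilities, count=1000)

for name in textures:
    print(name, picks.count(name))
```

Raising one weight makes that texture appear on more objects, while the others share the remainder in proportion to their own weights.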