Filmmakers Steve Jelley and Annalise Davis talk about using virtual production to make the most of scale, VFX and drama in their film, engage their audience and keep to an indie budget.

Steve Jelley, the co-founder and joint managing director of Dimension Studio, and co-producer Annalise Davis worked together on the recent disaster film ‘No Way Up’, an independent production from Altitude Films. The story combines a mid-ocean airplane crash and an aggressive shark into a survival story, taking viewers – as well as the characters – from tens of thousands of feet above sea level to the ocean floor.

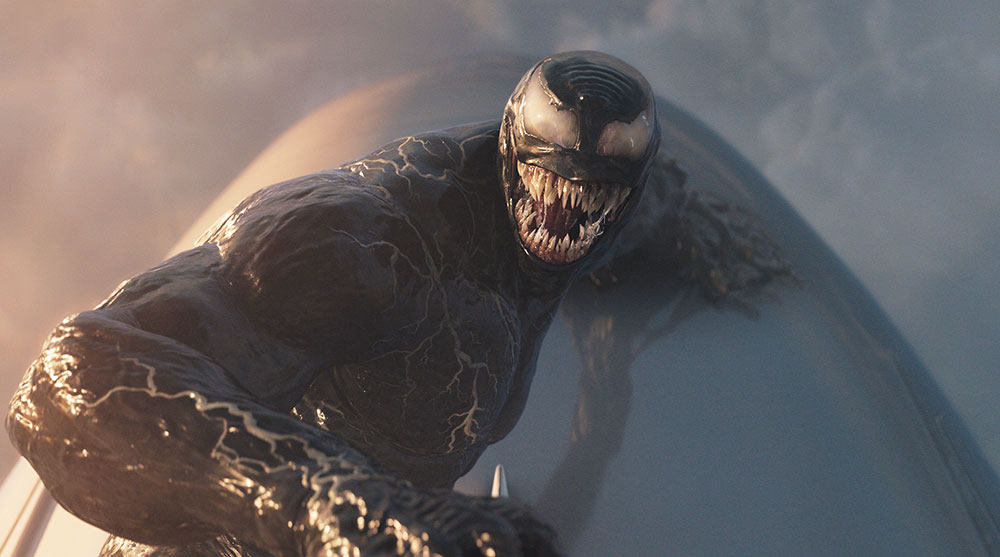

It’s a sensational story. A jet airliner crashes into the sea and, with a handful of survivors trapped in an airlock in the fuselage, sinks to the bottom where it balances on the edge of a cliff above a dark trench. A shark appears, the air supply rapidly dwindles and the passengers are forced to rely on their courage, ingenuity and each other to stay alive. Don’t worry – three of them do survive, including the lead character. But, as the final sequence closed in, the filmmakers faced an interesting challenge.

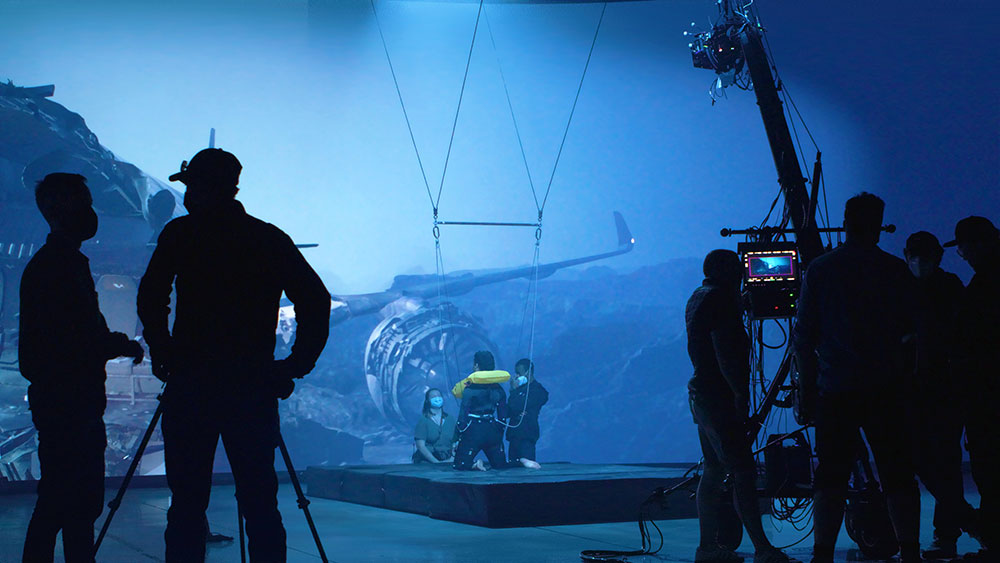

The filmmakers knew they had to bring the heroine to the surface while suspending the audience’s disbelief, and wanted to make the most of the extreme, contrasting environments the characters find themselves in. They also had to keep an eye on the production’s budget. They chose to create the sequence using virtual production in a dry-for-wet LED volume.

Continuity, Consistency and Storytelling

Steve Jelley’s company Dimension Studio specialises in volumetric, 3D capture and virtual productions. “Our decision to use virtual production relates first of all to storytelling, combined with the fact that ‘No Way Up’ is an independent feature film,” he said. “Using VP would allow us to avoid relying too heavily on visual effects, saving time and money. It also alleviated concern about continuity and consistency across the film.”

On the one hand, this movie was always going to be a VFX-heavy project. Between the shark, the plane crash and the underwater environment – including underwater bubbles that became a key story point – a significant part of the story’s success would rely on the effects and how well they matched the main unit’s live action footage. In fact, most of the film was shot practically in a water tank, with various sequences shot dry and finished with VFX.

Using virtual production (VP) would allow them to continue filming practically through the final scene, with a film crew familiar with the overall look, and continue using the same anamorphic lenses that Annalise and the DP Andrew Rodger had chosen for the project.

They could transfer the DP’s skill at handling the practical underwater look and environment to their dry-for-wet shoot. Equally important, they would be able to take advantage of the lead actress’ live action performance on the dry stage.

Everything On Screen

Steve and Annalise valued the ability that VP gave them to put all of their story elements on screen with high enough production values to pull the audience in. Annalise said, “With Dimension’s VP team, we could generate the contrast in scale we wanted in-camera, moving from the claustrophobic airplane interior out into the expanse of the open ocean. We could also visualise the character’s story by capturing her as a small figure against the massive, sunken plane exterior, projected onto the LED walls.”

Steve said, “There was also another practical reason for choosing to work with VP for the final sequence. As our character tries to swim to the surface, she runs out of air and we think we have lost her. But her life vest pulls her up to the surface regardless, and she manages to survive.

“To create this with traditional techniques, you would either have to cut together a long sequence from multiple shots, or build a long VFX sequence,” Steve said. “Instead, we had her ‘swimming’ on a wire on a dry set in front of projections on LED screens, while shooting very long takes of nearly 20 minutes, and in the end needed very few cuts, elongating the action in time and space. This was the director Claudio Fäh’s vision for the end of the film, which we achieved.”

Virtual Expertise

Steve and Annalise first worked together on director Paul Franklin’s short film ‘Fireworks’, a virtual production completed during lockdown in 2020. In those pioneering days of VP in the UK, they built their own LED wall. From that project, Dimension Studio has continued to work on other virtual productions, including Robert Zemeckis’ ‘Pinocchio’ for Disney. Steve also worked on ‘Masters of the Air’ for Apple TV. Then, two years ago in 2022, Dimension shot ‘No Way Up’ at their studio in London.

Steve said, “We’re now moving to a new, larger facility and meanwhile have been working on another virtual production at Cinecittà Studios in Rome with Roland Emmerich on the forthcoming TV series ‘Those About to Die’.”

‘No Way Up’ became one of the first projects to use VP for a dry-for-wet shoot. The complex on-set workflow needed a generous amount of planning time, and collaboration between the production team and other teams that may not have been necessary in the past. Crane moves and animation paths had to be determined to result in the desired scale in the environments. The large visual effects component of the movie needed detailed visualisation of its own, and Annalise’s and the DP Andrew Rodger’s decision to shoot with anamorphic lenses needed to be planned for as well.

In Pre-Production with Unreal Engine

Dimension’s artists worked with Claudio to explore options and goals for all the underwater sequences, and build out the scenes in Unreal Engine. They built a model in Unreal of the crashed airplane to plot its continuous descent toward the trench at sea bottom, and considered camera angles, lighting and mood, taking the opportunity for iterative exploration in the software without the cost of committing to any versions. The process led to very detailed previs and techvis, not just for the VP sections but also the shots they needed to cut into, especially the VFX shots.

For instance, the plane crash was staged and shot by Shoot Aviation in Maidenhead, who specialise in authentic aviation footage for cinema. Annalise said, “From there, the production submerged a physical, modular airplane set in a water tank, with the cast performing inside, for the plane interior sequences. A very complex rig was needed because the angle of the plane was essential to the story – as events proceed, the plane continues to descend along the slope of the trench, calling for the angle to change from one scene to the next at different depths.”

It took a lot of work, but because the majority of the film had been shot in the water tank, both the VP dry-for-wet footage and the VFX shots had a real, grounded reference to match to. Claudio’s early decisions regarding mood, lighting and looks persisted because of the practical start point and attention to matching and integration. “As it is in any project, that was the main VFX challenge – matching the physical reality (in this case, underwater) with a digital creation,” said Steve.

“The airplane model that we projected on the LED screens on the VP set was a VFX asset, and was turned over to the vendors along with the Unreal scene, giving those teams all of the necessary reference. While Unreal isn’t the same as a VFX pipeline, it served as an accurate way to communicate the world scale, creative intent and lighting in proxy format.” That information, along with the consistent airplane asset, added up to the critical set-to-post transfer they needed, resulting in beautiful visual effects work from VFX supervisor Philip Nauck and the artists of vendors including Cyclo VFX, Stage 23 and others.

Anamorphic

Aiming for a feeling of scale and a cinematic look, Annalise and the DP Andrew Rodger wanted to shoot anamorphically, choosing full frame Caldwell Chameleon lenses. The DP also wanted to avoid keeping the background too clearly in focus, for a more claustrophobic effect. Steve mentioned that anamorphic lenses are sometimes avoided for virtual productions, but he has found ways of managing the images while still capturing the attractive lens flares and classic film look.

“The difficulty comes with the virtual depth of field,” he said. “Sometimes your focal plane needs to be in virtual space – that is, beyond the wall. It’s certainly possible to make that work but you must deal with the anamorphic bokeh (out-of-focus areas) and optical effects at the edges of the frame. These can’t be replicated through a standard Gaussian blur – deliberate blurring in selected areas – in order to achieve a virtual focus plane. To work around the issue, it’s important to keep control of the focus plane and keep the wall itself more in focus – which is contrary to the convention of defocussing for VP. Higher resolution content is also needed to support that level of focus.

“It’s also important to consider how much of the wall is in the camera frustum – the field of view. A smaller frustum is easier to work with, but when you have the whole wall in view, as we did on the dry-for-wet volume with the anamorphic lens, you need to be sure your render power and screens can deliver. Our volume was built with ROE Black Pearl 2 screens, which we rendered in 8K.”
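As a rough illustration of the frustum point, the sketch below estimates how much of a flat LED wall falls inside the camera's horizontal field of view. It uses a simple thin-lens approximation in which an anamorphic squeeze factor widens the horizontal view, and it assumes the camera faces the centre of the wall head-on. The function names and every number are hypothetical, chosen only to show the arithmetic; none of them come from the production.

```python
import math


def horizontal_fov_deg(sensor_width_mm, focal_length_mm, squeeze=1.0):
    """Horizontal field of view in degrees (thin-lens approximation).

    The anamorphic squeeze factor is modelled as widening the effective
    sensor width; squeeze=1.0 describes a spherical lens.
    """
    half_angle = math.atan(sensor_width_mm * squeeze / (2 * focal_length_mm))
    return math.degrees(2 * half_angle)


def wall_width_in_frustum_m(distance_m, sensor_width_mm, focal_length_mm, squeeze=1.0):
    """Width of a flat wall covered by the frustum, camera head-on at distance_m."""
    return distance_m * sensor_width_mm * squeeze / focal_length_mm


def frustum_fraction(wall_width_m, distance_m, sensor_width_mm, focal_length_mm, squeeze=1.0):
    """Fraction of the wall's width inside the frustum, capped at 1.0.

    Assumes the camera is centred on the wall (an assumption for illustration).
    """
    seen = wall_width_in_frustum_m(distance_m, sensor_width_mm, focal_length_mm, squeeze)
    return min(1.0, seen / wall_width_m)


if __name__ == "__main__":
    # Hypothetical setup: 36 mm wide sensor, 50 mm lens, camera 6 m from a 10 m wall.
    for squeeze in (1.0, 2.0):
        fraction = frustum_fraction(10.0, 6.0, 36.0, 50.0, squeeze)
        print(f"squeeze {squeeze}: {fraction:.0%} of the wall is in frame")
```

With the same sensor, lens and distance, the wider anamorphic view takes in more of the wall, which is why the render and screen resolution have to hold up across a larger area.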

Practical Workflow

Of the 25-day shoot, 23 days were shot practically and two days were spent in the LED volume capturing the dry-for-wet final sequence, which resulted in 10 minutes of screen time. Steve talked about managing the shoot on the stage. “The actress performed on a wire, giving us swimming sequences that were as long as possible, as mentioned, to avoid a final sequence that was full of cuts. It was quite hard work for her physically, and she did a wonderful job.

“We tracked everything. Because she was wearing a wet suit, we could give her a full set of tracking markers. We also tracked the camera, of course, which was on a crane. Tracking meant we knew where all elements were at each moment, and that meant we could trigger the airplane imagery to pass behind her at just the right angle and just the right time.” The lighting was especially crucial here, for drama, for realism and to communicate change of depth and match principal photography. In fact, the airplane’s continuous descent made lighting a large part of the look throughout the story, both inside and outside the fuselage, starting on the ground and then continually changing as the plane sinks further underwater.
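The tracking idea Steve describes, knowing where the camera and performer are so that imagery can pass behind her at the right moment, can be pictured with a little geometry. The sketch below is not Dimension's method or anything from Unreal Engine. It is a minimal illustration that assumes a flat wall lying on the plane z = wall_z, with camera and performer positions given in metres in one shared tracking space. The helper names `wall_point_behind` and `should_cue` are hypothetical.

```python
import math


def wall_point_behind(camera, subject, wall_z):
    """Return the (x, y) point on the wall directly behind the subject as seen
    from the camera, by extending the camera-to-subject ray to the plane
    z = wall_z. Positions are (x, y, z) tuples in metres."""
    cx, cy, cz = camera
    sx, sy, sz = subject
    dz = sz - cz
    if dz == 0:
        raise ValueError("camera-to-subject ray is parallel to the wall")
    t = (wall_z - cz) / dz  # ray parameter: 0 at the camera, 1 at the subject
    if t < 1:
        raise ValueError("subject is not between the camera and the wall")
    return (cx + t * (sx - cx), cy + t * (sy - cy))


def should_cue(asset_xy, target_xy, tolerance_m=0.25):
    """True when an on-wall asset is within tolerance_m of the target point."""
    return math.dist(asset_xy, target_xy) <= tolerance_m


if __name__ == "__main__":
    # Hypothetical positions: camera on a crane, performer on a wire, wall 4 m away.
    target = wall_point_behind((0.0, 1.0, 0.0), (1.0, 2.0, 2.0), 4.0)
    print("point on wall behind performer:", target)
    print("asset lined up:", should_cue((2.1, 3.0), target))
```

Because the target point is recomputed from live positions, it moves whenever the camera or the performer moves, which is one reason a shoot without motion control needs continuous tracking.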

Steve also noted, “This wasn’t a motion-controlled shoot – the camera moves had to be handled on the fly to ensure our timing was right. We also needed responsive talent and technicians to keep the sequence moving and coordinated. As well as the VFX supervisors working on set with us, we had one virtual production supervisor on the floor with the camera team, and another overseeing projection of the Unreal / background imagery for the shoot.”

Ultimately, those early Unreal previs sequences helped with camera moves but, given so many moving parts, a level of improvisation was needed. Fortunately, Unreal both powers the background and allows near-to-live changes. As Annalise commented, “Techniques aren’t what lead the filmmaking process. Story and characters need to be allowed to do that.”

Potential

The physical reality of moving the camera, the performers and the virtual background can demand changes at any time. Steve estimates about 10 people were on set at a time forming the VP crew, outside of the main unit crew, to ensure the shoot came together and that the DP could capture what was on set in front of him.

So, virtual production was not chosen as an easy option for this project but as an enabling technique for an independent production to realise studio-level quality on screen. “Filmmakers are now ready, with ideas and talent, and need accessible methods,” Steve said.

“Virtual production can open up settings and scales for films from underwater to fantasy to historical. It can help move some of the traditionally post-production elements of VFX onto the set, placing them in the hands of the producers, like us, and the camera crew. Among other factors, it improves continuity in the story and consistency throughout the effects themselves, and helps to preserve the critical conversations between the director, DP and designers.” dimensionstudio.co