DMC’s remote sports production workflow at the Open was a flexible set-up based on AMPP, with cloud tools for replays, switching and mixing, relaying signals to Oslo for processing.

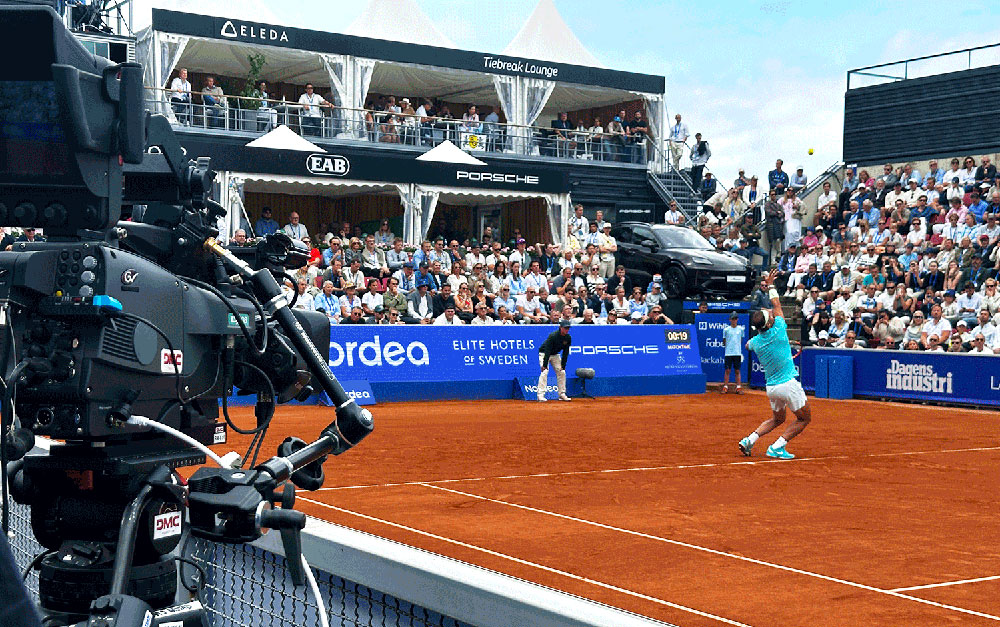

DMC Production has implemented an effective new remote production workflow for the 2024 Nordea Open tennis tournament, held 8 to 21 July at Båstad Tennis Stadium in Sweden. The new approach and setup significantly improve on the efficiency and economy of DMC’s sports broadcasts.

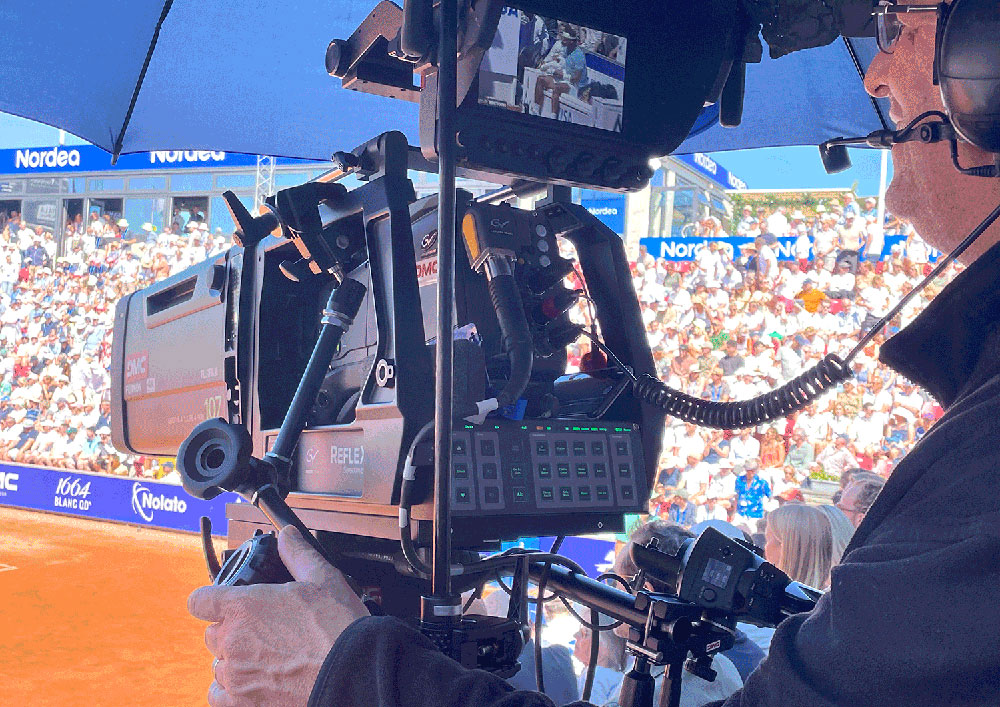

Using Grass Valley AMPP, DMC has refined its production workflow by processing all signals on-site, a substantial shift from the previous method of transporting signals to an off-site location for processing. Starting with capture on six Grass Valley LDX 98 cameras, the production deployed cloud-based LiveTouch X replay systems, an Audio Mix X audio mixer and a Maverik X production switcher, all running on the AMPP platform.

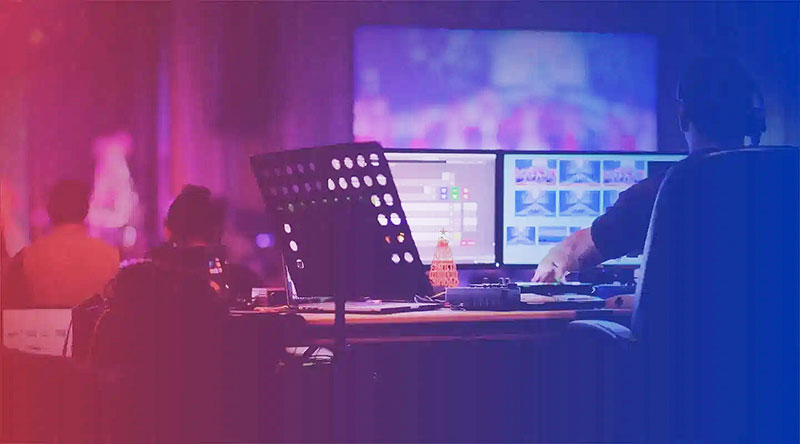

Distributed Environment

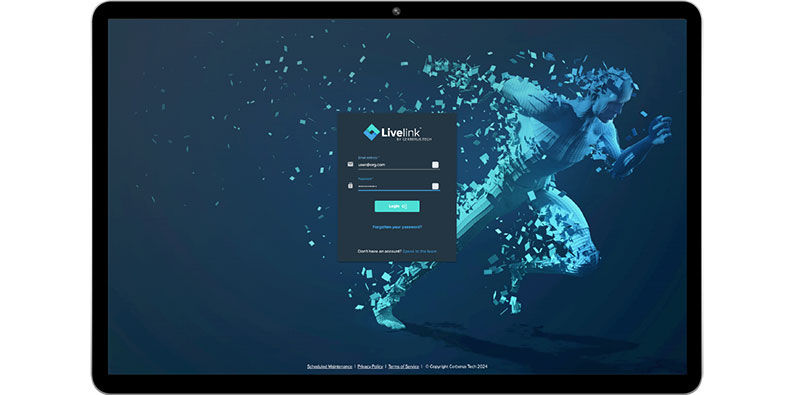

The LiveTouch X replay system, for instance, is software that can be remotely accessed as a service through devices with browsers connected over the internet. Its UI has a familiar design with soft controls for variable speed playback, shuttle, trim, page, bank, slot, playlist creation and similar replay functionality. The operator selects ingest and playout channels and edits highlights in the cloud. Owing to the integrated nature of GV AMPP, multiple operators in the production centre can access playlists and clips of other operators, creating a very efficient, distributed replay environment.

LiveTouch X consists of an ingest application and a playback application. Each operator’s Replay Operator application can see the record channels from multiple ingest applications. This arrangement makes record channel sharing very flexible, allows full replay functionality and supports multiple embedded real-time monitoring screens.

"Last year, we carried all the signals from the venue to Oslo for processing, which required significant bandwidth and logistical effort," said Johan Hedblom, MD – DMC Sweden. "This year, by processing everything on-site using AMPP, we only need to carry a single world feed signal out from the venue. This not only reduces bandwidth requirements but also simplifies the overall setup."

Switching and Mixing

Maverik X, an AMPP-native switcher, is purpose-built and scalable, meeting user requirements through microservices running on COTS servers. Because its AMPP apps acquire resources very quickly, many options are available for M/Es, key layers and transitions. An API is included for detailed, per-function integration with hardware surfaces.

Audio Mix X is designed on similar principles – an instantly recognisable software UI, access to any source on the AMPP network and a monitor window located either directly on the audio mixer UI or on a larger screen. Various mixing, grouping and mix-minus workflows adapt Audio Mix X to different applications.
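For readers unfamiliar with the term, a mix-minus is simply the full mix with one contributor's own signal left out, typically so that a commentator does not hear their own voice returned with a delay. The short Python sketch below illustrates the general idea only. It is not Grass Valley's implementation, and the source names and sample values are hypothetical.

```python
from typing import Dict, List

Samples = List[float]


def mix(sources: Dict[str, Samples]) -> Samples:
    """Sum equal-length mono sources sample by sample."""
    if not sources:
        return []
    length = len(next(iter(sources.values())))
    if any(len(s) != length for s in sources.values()):
        raise ValueError("all sources must have the same length")
    return [sum(s[i] for s in sources.values()) for i in range(length)]


def mix_minus(sources: Dict[str, Samples], exclude: str) -> Samples:
    """Return the full mix with the named source left out."""
    remaining = {name: s for name, s in sources.items() if name != exclude}
    return mix(remaining)


# Hypothetical sources, three samples each (illustrative values only).
sources = {
    "commentator": [0.5, 0.5, 0.0],
    "court_mic": [0.25, 0.0, 0.25],
    "crowd": [0.125, 0.125, 0.125],
}

program = mix(sources)                               # everything, for the programme output
commentator_return = mix_minus(sources, "commentator")  # everything except the commentator
```

Here `program` contains all three sources, while `commentator_return` carries only the court microphone and crowd.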

The mixing system is equipped with gain, full equalizer, compression, aux channels, pre-faders, subgroups and monitoring outputs, and fast ramping avoids abrupt changes in levels when clicking to a new position on the fader. When used with a hardware panel, the interaction between hardware and software has no perceptible user delay.
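The idea behind fader ramping can be sketched in a few lines of Python. When a fader is clicked to a new position, the gain moves to the target over a short run of samples instead of jumping in one step, which would be heard as a click. The linear, per-sample ramp and the function names here are assumptions for illustration, not a description of how Audio Mix X works internally.

```python
from typing import List


def ramp_gains(start: float, target: float, steps: int) -> List[float]:
    """Return `steps` gain values moving linearly from `start` to `target`.

    The last value is exactly `target`; `start` itself is not included.
    """
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return [start + (target - start) * (i / steps) for i in range(1, steps + 1)]


def apply_fader_jump(samples: List[float], start: float, target: float,
                     ramp_steps: int) -> List[float]:
    """Apply a gain change to `samples`, ramping over the first `ramp_steps` samples."""
    gains = ramp_gains(start, target, ramp_steps)
    out = []
    for i, x in enumerate(samples):
        # Once the ramp is finished, hold the target gain.
        g = gains[i] if i < len(gains) else target
        out.append(x * g)
    return out


# A constant signal with the fader clicked from fully closed (0.0) to unity (1.0).
smoothed = apply_fader_jump([1.0] * 6, start=0.0, target=1.0, ramp_steps=4)
```

In this example the output level rises in four equal steps and then holds at unity. A real mixer would run the ramp over many more samples at the audio sample rate.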

Norway, Stockholm, London

The new workflow has reduced the need for on-site staff and equipment by a large margin. Only six crew members are required at the venue: four cameramen, an assistant engineer and a broadcast coordinator. Camera control and shading are managed remotely from Norway, while the director, sound engineer and replay operator work from DMC's facilities in Stockholm. For the world feed, ATP Media add international commentary and graphics via London.

Keeping staff at their usual workplaces and minimising the transportation of physical equipment have resulted in major environmental and economic advantages for DMC. Crew feedback from the Nordea Open has also been positive, with staff noting the ease of setup and management compared to previous years.

DMC plans to use the same approach for future events. These include the CEV Eurobeach Volley in the Netherlands on 13-18 August, where the AMPP workflow will produce the competition on one court, as well as the Swedish Padel Championship and the PBA Bowling Tournament in Sweden on 1 September.

"The difference this year is remarkable," said Johan Hedblom. "Through this approach, we have developed a new revenue stream at DMC, undertaking events like the Padel championship and PBA Bowling for the first time. We are eager to apply these new methods to other events and continue improving our workflows."

www.grassvalley.com